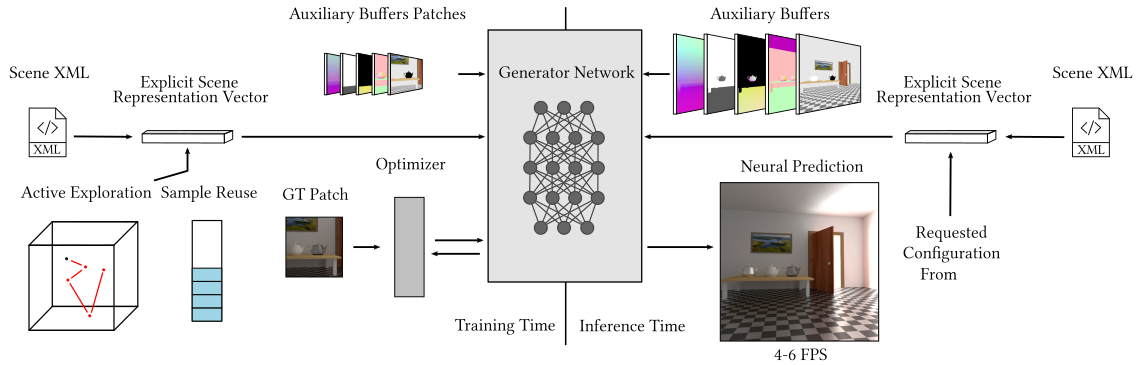

Overview

Overview of our method. At training time, we operate on patches of G-Buffers and use a Pixel Generator to generate the neural rendering. Our active exploration finds compositions of the scene where the Generator is struggling and explores similar samples. Our explicit scene representation vector compactly represents the variability of the scene to the Generator and defines the space D of possible scene configurations. Our sample reuse stochastically chooses either to render a new ground truth or to reuse a stored sample. During inference, the Pixel Generator takes as input the G-Buffers and the current state of the scene, given by the explicit scene representation vector, and generates an image with global illumination at interactive rates.
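The sample reuse step can be illustrated with a minimal sketch. It is not the implementation used in this work: the class name `SampleReuseBuffer`, the fixed reuse probability `reuse_prob`, the bounded `capacity` with random replacement, and the `render_fn` callback standing in for the ground-truth renderer are all assumptions made for illustration.

```python
import random


class SampleReuseBuffer:
    """Hypothetical buffer that either reuses a stored sample or renders a new one."""

    def __init__(self, render_fn, reuse_prob=0.5, capacity=1024, rng=None):
        self.render_fn = render_fn      # assumed callback: scene vector -> ground truth
        self.reuse_prob = reuse_prob    # assumed fixed probability of reusing a sample
        self.capacity = capacity        # assumed upper bound on stored samples
        self.rng = rng if rng is not None else random.Random()
        self.stored = []                # list of (scene_vector, ground_truth) pairs

    def next_sample(self, scene_vector):
        # Stochastic choice: reuse a stored sample when one exists and the draw allows it.
        if self.stored and self.rng.random() < self.reuse_prob:
            return self.rng.choice(self.stored)

        # Otherwise render a new ground truth for the requested scene configuration.
        sample = (scene_vector, self.render_fn(scene_vector))
        if len(self.stored) < self.capacity:
            self.stored.append(sample)
        else:
            # Buffer full: overwrite a random slot (an illustrative policy only).
            self.stored[self.rng.randrange(self.capacity)] = sample
        return sample
```

In this sketch, a training loop would call `next_sample` with a scene representation vector drawn from the space D, so that the expensive ground-truth renderer runs only on the fraction of iterations where the draw rejects reuse.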