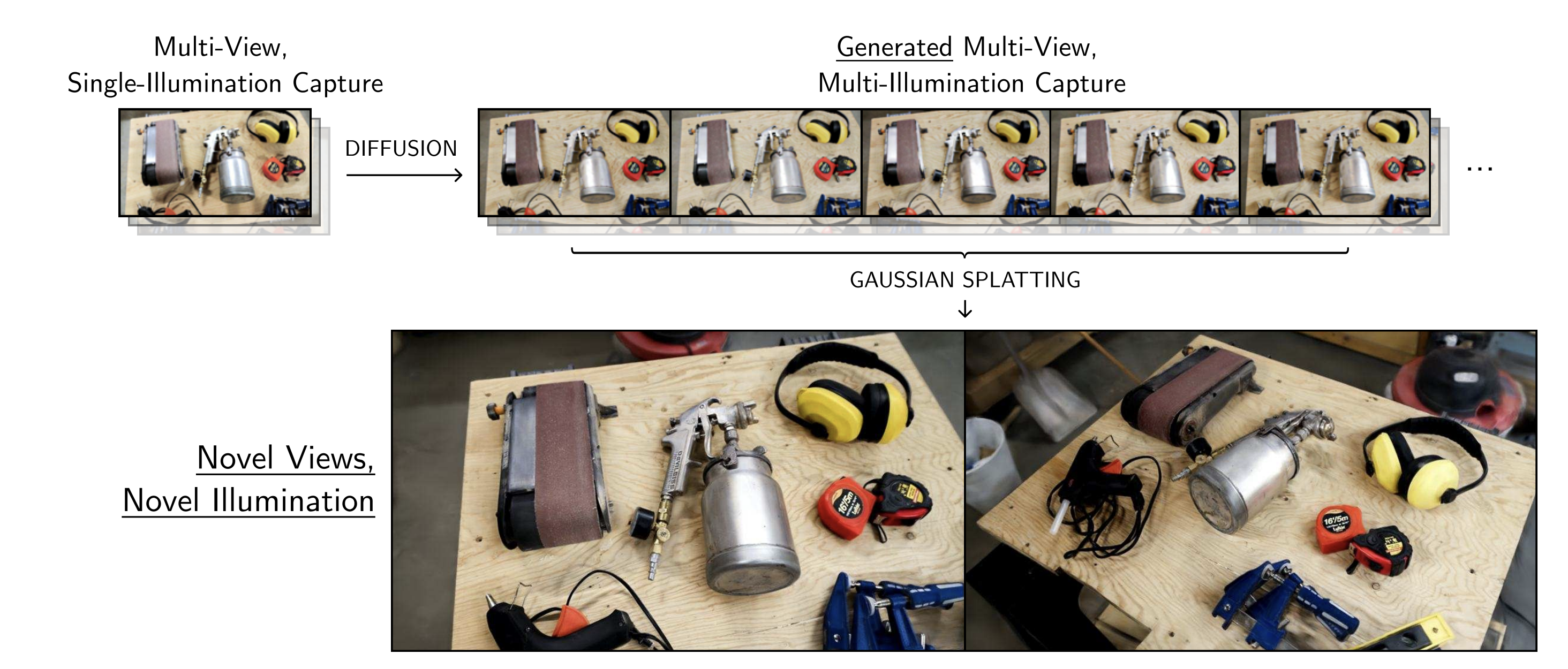

Our method produces relightable radiance fields directly from a single-illumination multi-view dataset, using priors from generative data in place of an actual multi-illumination capture. It is composed of three main parts. First, we create a 2D relighting neural network with direct control of lighting direction. Second, we use this network to transform a multi-view capture with single lighting into a virtual multi-lighting capture. Finally, we create a relightable radiance field that accounts for inaccuracies in the synthesized relit input images and provides a multi-view consistent lighting solution.

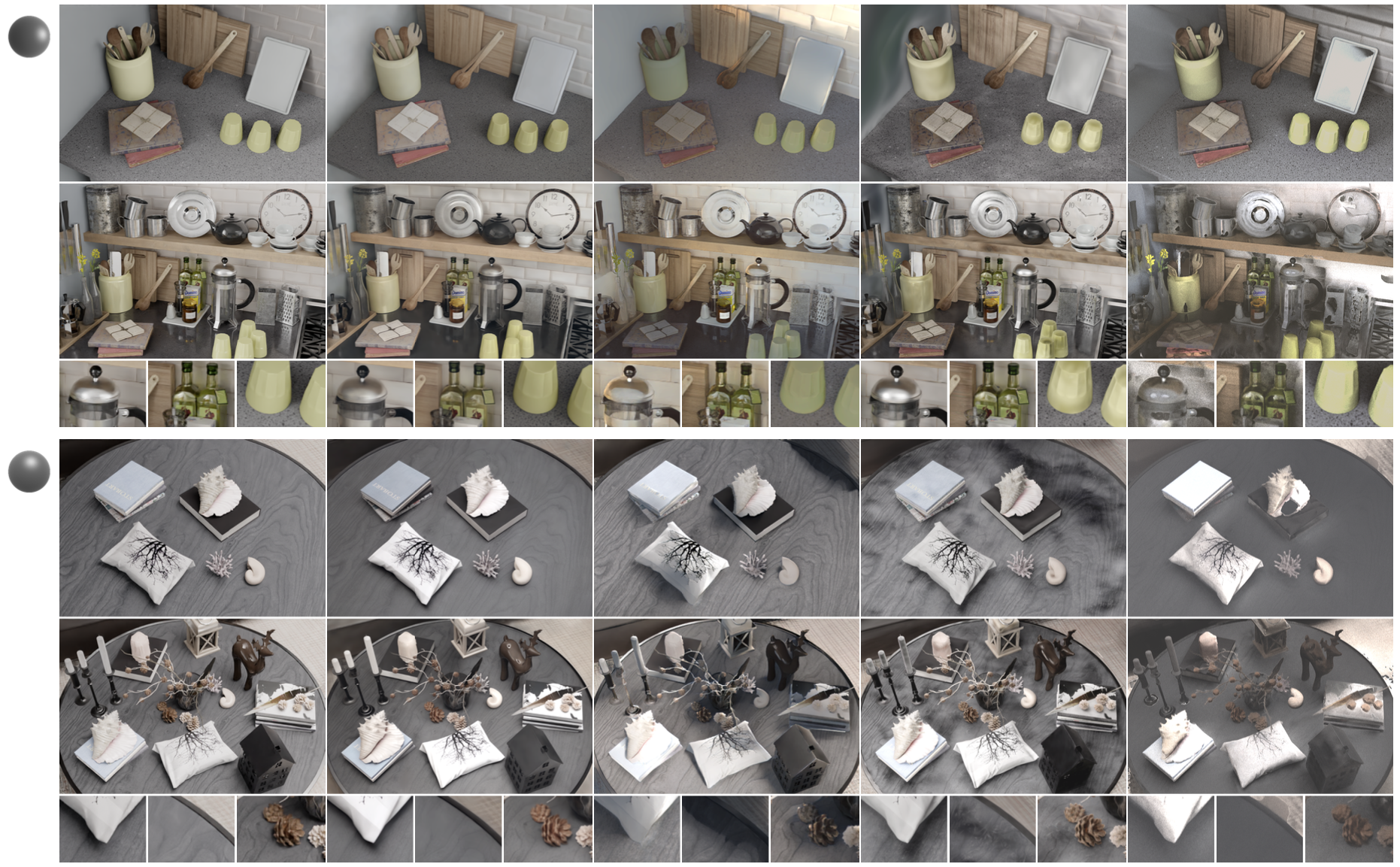

Because it does not rely on accurate geometry and surface normals, our method handles cluttered scenes with complex geometry and reflective BRDFs better than many prior works. We compare to Outcast, Relightable 3D Gaussians, and TensoIR.

Radiance fields like 3DGS rely on multi-view consistency, and breaking it introduces additional floaters and holes in surfaces.

To allow the neural network to account for this inconsistency and correct accordingly, we optimize a per-image auxiliary latent vector.
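As a rough illustration of the per-image latent idea, the sketch below keeps one latent row per training image and appends it to the network input together with the lighting direction. The function name `build_mlp_input`, the feature and latent sizes, and the choice of simple concatenation are all assumptions made for illustration; they are not taken from the paper's implementation. In practice the latent table would be optimized jointly with the network weights.

```python
import numpy as np

def build_mlp_input(features, light_dir, latents, image_index):
    """Concatenate per-primitive features, the light direction, and the
    latent vector of the training image being rendered (hypothetical layout)."""
    n = features.shape[0]
    # Repeat the shared light direction and the image's latent for every primitive.
    light = np.broadcast_to(light_dir, (n, light_dir.shape[0]))
    latent = np.broadcast_to(latents[image_index], (n, latents.shape[1]))
    return np.concatenate([features, light, latent], axis=1)

rng = np.random.default_rng(0)
num_images, latent_dim = 4, 8                  # assumed sizes
latents = np.zeros((num_images, latent_dim))   # one trainable row per training image
features = rng.normal(size=(5, 16))            # assumed per-primitive features
light_dir = np.array([0.0, 0.0, 1.0])

x = build_mlp_input(features, light_dir, latents, image_index=2)
print(x.shape)
```

Because each training image gets its own row, the network can attribute image-specific deviations to that row instead of baking them into the shared scene representation.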

Additionally, during training, all Gaussian primitives that project within the view frustum of a camera but are located in front of its z-near plane are culled. This pruning process, inspired by Floaters No More, removes most of the floaters.
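The culling test can be sketched as follows. This is a minimal NumPy version under stated assumptions: a pinhole camera looking along +z, a 4x4 world-to-camera matrix, a 3x3 intrinsics matrix `K`, and only the primitive centers being tested. The function name `cull_mask` and all parameter names are hypothetical and do not come from the authors' code.

```python
import numpy as np

def cull_mask(means_world, world_to_cam, K, width, height, znear):
    """Return True for primitives whose center projects inside the image
    but lies between the camera and its z-near plane."""
    n = means_world.shape[0]
    homo = np.concatenate([means_world, np.ones((n, 1))], axis=1)
    cam = (world_to_cam @ homo.T).T[:, :3]     # centers in camera space
    z = cam[:, 2]
    in_front = z > 1e-8                        # ignore points behind the camera
    safe_z = np.where(in_front, z, 1.0)        # avoid division by zero
    pix = (K @ cam.T).T
    u = pix[:, 0] / safe_z
    v = pix[:, 1] / safe_z
    inside = (u >= 0) & (u < width) & (v >= 0) & (v < height)
    return in_front & inside & (z < znear)

K = np.array([[100.0, 0.0, 50.0],
              [0.0, 100.0, 50.0],
              [0.0, 0.0, 1.0]])
means = np.array([[0.0, 0.0, 0.1],    # inside the image, closer than znear
                  [0.0, 0.0, 1.0],    # inside the image, beyond znear
                  [1.0, 0.0, 0.1],    # closer than znear, outside the image
                  [0.0, 0.0, -0.5]])  # behind the camera
mask = cull_mask(means, np.eye(4), K, width=100, height=100, znear=0.2)
print(mask)  # [ True False False False]
```

Only the first primitive is flagged: it sits directly in front of the camera, closer than the near plane, which is where floaters that explain away per-view inconsistencies tend to appear.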

@article{10.1111:cgf.15147,
  journal = {Computer Graphics Forum},
  title = {{A Diffusion Approach to Radiance Field Relighting using Multi-Illumination Synthesis}},
  author = {Poirier-Ginter, Yohan and Gauthier, Alban and Philip, Julien and Lalonde, Jean-François and Drettakis, George},
  year = {2024},
  publisher = {The Eurographics Association and John Wiley & Sons Ltd.},
  ISSN = {1467-8659},
  DOI = {10.1111/cgf.15147}
}

This research was funded by the ERC Advanced grant FUNGRAPH No 788065, supported by NSERC grant DGPIN 2020-04799 and the Digital Research Alliance Canada. The authors are grateful to Adobe and NVIDIA for generous donations, and the OPAL infrastructure from Université Côte d’Azur. Thanks to Georgios Kopanas and Frédéric Fortier-Chouinard for helpful advice.